-

Technology adoption metrics can be a false economy

They get bandied about and pored over as if they were the ultimate truth. They are just a proxy for value. They are a precursor to it, no doubt, i.e. you need to have adoption before you can get to value outcomes. But they are not the end goal. They don’t provide leading indicators that…

-

Diary of an Innovation Junkie

I originally wrote this post on LinkedIn under the guise of Accidental Intrapreneur. I’m bringing it over here after reflecting that innovation is really at the heart of my activities, hence the title change. This post also brings things up to date and documents my dalliances with innovation since forever. A few other semantic pointers.…

-

Customer success operations – some answers

I was asked by Brook Perry from ’nuffsaid if I would be interested in contributing to an article she was working on with others to get feedback on a set of questions covering customer success operations. As the topic is close to my heart, I agreed. I’ll update this post with a link to the article once it…

-

How to measure value for customers

This is a worthy challenge I’ve grappled with before, just check out my posts under the metrics tag. The other day I got my hands on a recently published Forrester report with the same title as this post. I cannot share the report for obvious reasons but this is my review of the highlights of…

-

The new customer success partnerships

Customer Success teams in SaaS companies (mostly what I am focusing on here) probably like to think they are the spearhead for making customers successful (as the name suggests). In truth, it’s not that simple (is it ever?). First, a little context on the two DanelDoodles I shared in this post. I use the Paper…

-

Metrics that matter in Customer Success

I had an interesting conversation on LinkedIn the other day. It was based on a retrospective view of the customer success profession which I had written about on the State of Customer Success in 2018 after attending a conference. I captured the essence of the conversation in a DanelDoodle and discuss it briefly here.

-

What good data looks like

This last week I attended a meetup and workshop in London organised by Customer Success Network, a European-based not-for-profit community for customer success managers. It had the same title as this post. It was an excellent session, which started off with a few minutes of talking by Dan Steinman, GM Gainsight EMEA. I then facilitated one of the…

-

Measuring customer success one advocate at a time

For some, creating customer advocates is a measure of customer success. Not enough though, in my view. I have a view because I’ve helped create a fair share of advocates. Just recently three of my customers got up on stage and spoke at a large event to other customers and prospects. I’ve also had the…

-

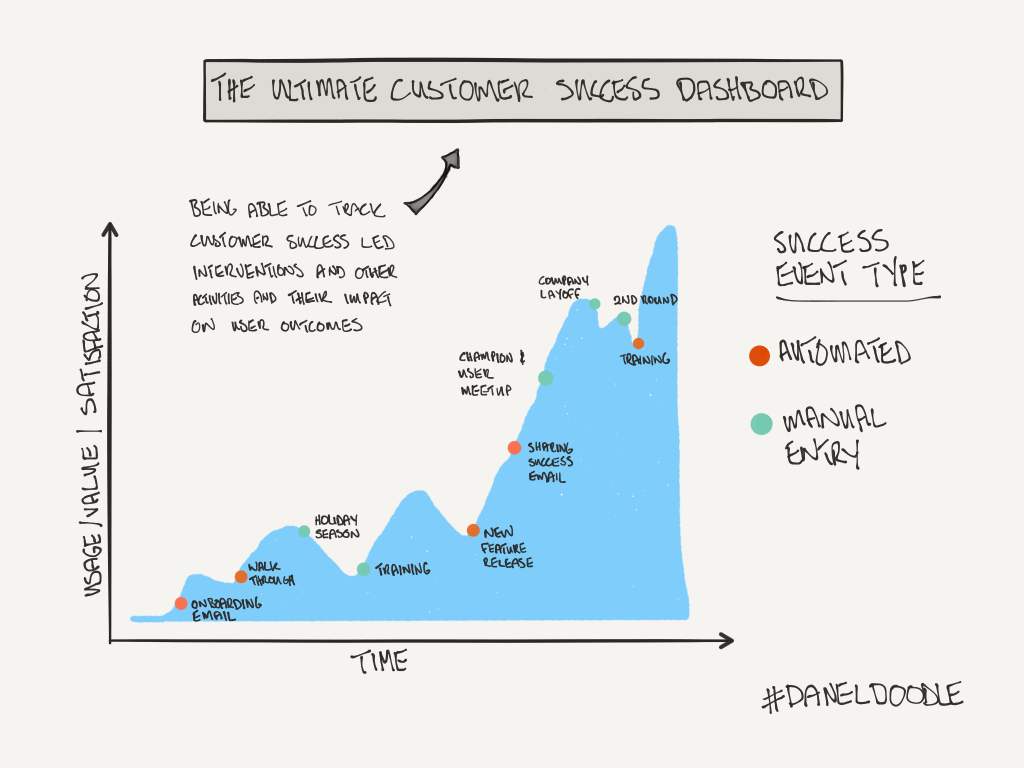

Metrics that matter and the ideal customer success dashboard

One chapter of my new eBook / trend report is going to cover metrics. What you track and how you track it. There is much written about the metrics themselves and I wasn’t yet ready to delve into that. I doodled what I thought was largely the ideal dashboard I could have in front of…
